Nick Schuch, System Operations Lead

In this blog post we will take you through all the components required to provision a high availability Drupal 8 stack on Microsoft Azure. This is an extract from the demonstration given at Microsoft Ignite on the Gold Coast in November 2015.

Drupal is content management software. It's used to make many of the websites and applications you use every day. Drupal has great standard features, like easy content authoring, reliable performance, and excellent security. But what sets it apart is its flexibility; modularity is one of its core principles. Its tools help you build the versatile, structured content that dynamic web experiences need.

aGov is a totally free, open source Drupal CMS software distribution developed specifically for Australian Government organisations. Easy to install and configure, this pre-packaged Drupal CMS complies with all Australian Government standards and provides a full suite of essential website management features. It can be used out of the box for basic websites, or customised with any standard Drupal 8 module to deliver large scale, complex government platforms.

To replicate this demo you will need the following tools installed:

So why do we want a high availability (HA) architecture? Why can’t we just deploy our application on a single Virtual Machine (VM)? Both questions come down to Azure’s service level agreement (SLA): to get a 99.95% SLA on Azure, you need an architecture that runs at least 2 hosts. But don’t fear: just because our site is deployed in a high availability architecture doesn’t mean we have to make things difficult. For this demo we are going to stick to the following 3 principles:

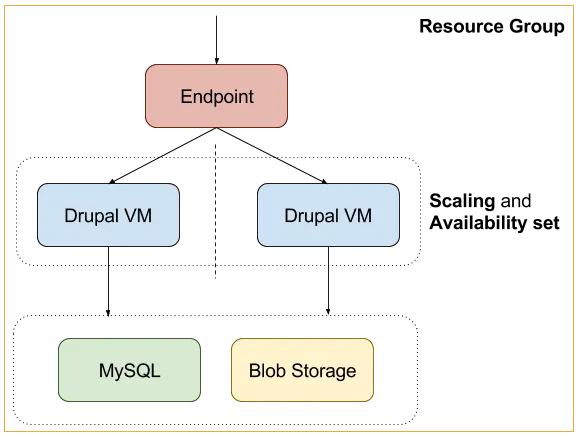
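To put the 99.95% figure in concrete terms, here is a small sketch that converts an uptime percentage into the downtime it still permits. It assumes you supply the period length in minutes (a 30-day month is 43,200 minutes) purely for illustration; the SLA document itself defines the measurement period and how downtime is counted.

```shell
#!/bin/bash
# Convert an uptime percentage into the downtime it allows over a period.
# Assumption: the period length is given in minutes (43200 = a 30-day month).
allowed_downtime_minutes() {
  local sla_percent="$1" period_minutes="$2"
  # awk does the floating point maths that bash arithmetic cannot.
  awk -v sla="$sla_percent" -v mins="$period_minutes" \
    'BEGIN { printf "%.1f\n", (100 - sla) / 100 * mins }'
}

allowed_downtime_minutes 99.95 43200    # prints 21.6
allowed_downtime_minutes 99.95 525600   # prints 262.8 (a 365-day year)
```

In other words, even a 99.95% SLA leaves room for roughly 21.6 minutes of downtime in a 30-day month.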

The diagram below illustrates the areas which we are going to focus on for this demo and how they interact.

Resource Groups

Azure is very focused on providing high level tooling for deploying applications, without requiring the end user to know anything about the low level architecture, e.g. networking and replication. The core fundamentals that resource groups provide us are:

So how do I create a resource group? Resource groups are easily created via the Azure UI when creating new resources. For the purpose of this demo we will create one at the same time as our database backend, then add each additional resource to it through the same UI.

The database is the primary storage backend for the application’s persistent data; this component is responsible for storing all our content. Drupal 8 core ships with database drivers for MySQL, PostgreSQL and SQLite. In this demo we are going to use a MySQL backend, and on Azure we have 2 options:

For this demo we are going to use ClearDB, the reasons behind this are:

As your site grows you should have a discussion about which backend suits you better. In the following video we provision an Azure ClearDB MySQL backend. Here is some further reading on the database backends:

In conjunction with database storage, we also need file storage to make uploaded files available to all our application servers. If only we had a service we could use as a drop-in file storage backend. Actually, we do! Azure Files to the rescue. Azure Files provides a service for mounting scalable backend storage over the SMB 3.0 protocol. To set up Azure Files we need to:

The video below demonstrates the setup of a file share; these details will be used later on in the demo.
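For reference, mounting an Azure Files share from Linux comes down to a CIFS mount using SMB 3.0, with the storage account name as the user name and an account key as the password. The hypothetical helper below only prints such a command so you can inspect it; the account, share and key are placeholders. The uid/gid of 33 and the 0777 modes are chosen to line up with the numbers passed to mount-smb in the custom data script later in this post (an assumption about what those arguments mean); 33 is the www-data user on Debian and Ubuntu.

```shell
#!/bin/bash
# Hypothetical helper: print (do not run) a CIFS mount command for an Azure Files share.
# Arguments: storage account, share name, account key, mount point, [uid], [gid].
azure_files_mount_cmd() {
  local account="$1" share="$2" key="$3" mountpoint="$4"
  local uid="${5:-33}" gid="${6:-33}"   # 33 is www-data on Debian/Ubuntu
  local opts="vers=3.0,username=${account},password=${key},uid=${uid},gid=${gid},dir_mode=0777,file_mode=0777"
  printf 'mount -t cifs //%s.file.core.windows.net/%s %s -o %s\n' \
    "$account" "$share" "$mountpoint" "$opts"
}

# Example with placeholder names (mystorageacct and agovfiles are hypothetical).
azure_files_mount_cmd mystorageacct agovfiles "STORAGE_KEY" /data/app/sites/default/files
```

Passing the key with `password=` exposes it in the process list; on real hosts, a `credentials=` file read by mount.cifs is the safer choice.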

PreviousNext have done all the hard work of deploying aGov on Azure by shipping images to the [VM Depot](https://vmdepot.msopentech.com/List/Index). To achieve this, PreviousNext leverage Packer by HashiCorp and a custom provider from Microsoft:

With these tools combined we can provision and “bake” aGov images for you to leverage in your deployments. If you wish to extend the image, you can fork our open source project.

For your convenience we ship 2 flavours of this image:

For this demo we will only be using the HA image. In the following video we take you behind the scenes and provision a new image which we can add to the VM Depot.

So how do we deploy our image and wire the resulting hosts to our backend services? Custom Data to the rescue!

Custom Data allows us to inject a script that runs when each host is provisioned. Here we have an example script which:

Create a new script with the name custom-data.sh with the following contents:

    #!/bin/bash
    # setenv and mount-smb are not standard bash commands; they are assumed to be
    # helper scripts shipped with the aGov HA image.
    # Fill in the blank values below with your own settings.

    # Changing The Storage Location of the Sync Directory: https://www.drupal.org/node/2431247
    setenv AGOV_DIR_CONFIG_SYNC "sites/default/files/config_/sync"
    setenv AGOV_HASH_SALT ""
    setenv AGOV_DB_HOST ""
    setenv AGOV_DB_NAME ""
    setenv AGOV_DB_USER ""
    setenv AGOV_DB_PASS ""

    # Environment variables will take effect once Apache has been restarted.
    sudo /etc/init.d/apache2 restart

    # Persistent storage for sharing between hosts.
    mount-smb "" "/data/app/sites/default/files" 33 33 0777 0777 "" ""
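Because every value in custom-data.sh starts out blank, one cheap safeguard is to stop before restarting Apache if anything was left unfilled. This is a sketch that assumes you first move the values into shell variables; the function and variable names are hypothetical and not part of the aGov image.

```shell
#!/bin/bash
# Hypothetical pre-flight check: fail if any of the named variables is empty or unset.
require_nonempty() {
  local name missing=0
  for name in "$@"; do
    # ${!name} is bash indirect expansion: the value of the variable called $name.
    if [ -z "${!name}" ]; then
      echo "missing value: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

DB_HOST="db.example.com"   # hypothetical value
DB_NAME=""                 # deliberately left blank
require_nonempty DB_HOST DB_NAME || echo "refusing to continue: fill in the blank values first"
```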

You will reference custom-data.sh in the next section, so take note of its location. Now we are at the stage where we want to deploy our hosts, and this is where the Azure CLI comes in.

We chose to use the Azure CLI for this demo so you can reproduce the same results with drop-in scripts. If you still need to install the Azure CLI tools, you can do so in either of the following 2 ways:

Using the Azure CLI and the following script we can provision 3 hosts and wire them to our backends. Here is a breakdown of the variables:

Create a new file called create-vms.sh with the following contents:

    #!/bin/bash
    # Fill in the blank values below with your own settings.
    IMAGE="vmdepot-61257-1-512"
    REGION='Australia East'
    USER=""
    PASS=""
    GROUP="agov8-ha"
    AVAIL="agov8-ha"

    # The location of your custom-data script.
    CUSTOM="/home/nick/.azure/custom-data.sh"

    # Three hosts in the same availability set, each with its own SSH port.
    azure vm create --connect "$GROUP" -o "$IMAGE" -l "$REGION" "$USER" "$PASS" --availability-set="$AVAIL" --ssh 12345 --custom-data="$CUSTOM"
    azure vm create --connect "$GROUP" -o "$IMAGE" -l "$REGION" "$USER" "$PASS" --availability-set="$AVAIL" --ssh 12346 --custom-data="$CUSTOM"
    azure vm create --connect "$GROUP" -o "$IMAGE" -l "$REGION" "$USER" "$PASS" --availability-set="$AVAIL" --ssh 12347 --custom-data="$CUSTOM"

When you run this script, you should see three new VMs being created in the output. In this video we are provisioning our hosts with the above script.
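Because the three `azure vm create` calls differ only in their SSH port, the same script can be written as a loop. This is a sketch rather than the script used in the demo: it reuses the flags from create-vms.sh unchanged, swaps the hard-coded path for `$HOME/.azure/custom-data.sh`, and defaults to a dry run that only echoes the commands. Export `AZURE=azure` to execute them for real.

```shell
#!/bin/bash
# Loop-based variant of create-vms.sh: one "azure vm create" per host, SSH ports counting up.
IMAGE="vmdepot-61257-1-512"
REGION="Australia East"
USER=""    # fill in
PASS=""    # fill in
GROUP="agov8-ha"
AVAIL="agov8-ha"
CUSTOM="$HOME/.azure/custom-data.sh"

# Dry run by default: "echo azure" prints each command instead of running it.
AZURE="${AZURE:-echo azure}"

create_vms() {
  local count="$1" base_port="$2" i
  for ((i = 0; i < count; i++)); do
    # $AZURE is left unquoted on purpose so "echo azure" splits into command + argument.
    $AZURE vm create --connect "$GROUP" -o "$IMAGE" -l "$REGION" "$USER" "$PASS" \
      --availability-set="$AVAIL" --ssh "$((base_port + i))" --custom-data="$CUSTOM"
  done
}

create_vms 3 12345
```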

Now that we have provisioned our instances, we need to install the site. Here is an example set of commands which will:

In contrast to the other scripts, the following are commands which you run on the command line one after the other.

    # Connect to one of the hosts on the cluster (fill in your own user and host).
    $ ssh @ -p 12345

    # This is where the application is stored.
    $ cd /data/app

    # Install the application (fill in the admin password), running drush as the www-data user.
    $ CMD="drush site-install agov -y --site-name='aGov HA demo' --account-pass='' agov_install_additional_options.install=1"
    $ sudo -E -u www-data /bin/bash -c "$CMD"

When you run these commands, you will see a message confirming that Drupal was installed.

Endpoints are a networking abstraction which allows us to “poke holes” in the public IP and forward connections through to our VM instances running in the Resource Group.

Endpoints come in 2 types:

Here are some examples of using endpoints via the Azure CLI:

Single endpoint to access the “agov8-ha” host on port 8080

    $ azure vm endpoint create agov8-ha 8080 $LOCAL

Balanced endpoint to access the “agov8-ha”, “agov8-ha-2” and “agov8-ha-3” hosts on port 80

Create a new script create-endpoints.sh with the following contents:

    #!/bin/bash
    # Balanced endpoint rule shared by all three hosts (each field is explained below).
    RULES='80:80:tcp:10::tcp:80:/robots.txt:60:120:agov8-ha::'

    azure vm endpoint create-multiple agov8-ha "$RULES"
    azure vm endpoint create-multiple agov8-ha-2 "$RULES"
    azure vm endpoint create-multiple agov8-ha-3 "$RULES"

The RULES variable is quite complex, so here is a breakdown of the options we have passed into our balanced endpoint.

| Option | Value |
|---|---|
| public-port | 80 |
| local-port | 80 |
| protocol | tcp |
| idle-timeout | 10 (minutes) |
| direct-server-return | |
| probe-protocol | tcp |
| probe-port | 80 |
| probe-path | /robots.txt |
| probe-interval | 60 (seconds) |
| probe-timeout | 120 (seconds) |
| load-balanced-set-name | agov8-ha |
| internal-load-balancer-name | |
| load-balancer-distribution | |
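To make the colon-separated format easier to read, the sketch below splits a rule string and prints each field next to its option name. It only parses text and never calls Azure; the field order is taken from the table of options, and the helper names (`explain_rule`, `rule_value`) are hypothetical.

```shell
#!/bin/bash
# Option names in the order they appear in an endpoint rule string.
RULE_FIELDS=(public-port local-port protocol idle-timeout direct-server-return
  probe-protocol probe-port probe-path probe-interval probe-timeout
  load-balanced-set-name internal-load-balancer-name load-balancer-distribution)

# Print every option name alongside its value (empty fields print as blank).
explain_rule() {
  local -a values
  local i
  IFS=':' read -r -a values <<< "$1"
  for i in "${!RULE_FIELDS[@]}"; do
    printf '%-28s %s\n' "${RULE_FIELDS[$i]}" "${values[$i]:-}"
  done
}

# Print the value of a single named option, or fail if the name is unknown.
rule_value() {
  local name="$1" i
  local -a values
  IFS=':' read -r -a values <<< "$2"
  for i in "${!RULE_FIELDS[@]}"; do
    if [ "${RULE_FIELDS[$i]}" = "$name" ]; then
      printf '%s\n' "${values[$i]:-}"
      return 0
    fi
  done
  return 1
}

explain_rule '80:80:tcp:10::tcp:80:/robots.txt:60:120:agov8-ha::'
```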

When you run create-endpoints.sh, you should see the endpoints being created successfully in the output. To verify that it ran successfully, run the following command against one of the VMs:

    $ azure vm endpoint list agov8-ha

This will return details on the endpoints which have been setup for the “agov8-ha” VM instance.

In this demo we set up a balanced endpoint on our application.
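Our balanced endpoint uses a tcp probe on port 80, which checks that a TCP connection can be opened on that port. When a host drops out of the load-balanced set, you can run a rough equivalent yourself. This sketch relies on bash's `/dev/tcp` redirection and the coreutils `timeout` command; it is not how Azure implements its probe, and the host name in the example is hypothetical.

```shell
#!/bin/bash
# Rough imitation of a TCP health probe: succeed if a TCP connection opens in time.
# Arguments: host, port, [timeout in seconds, default 5].
tcp_probe() {
  local host="$1" port="$2" secs="${3:-5}"
  # Host and port are passed as arguments rather than spliced into the command string.
  timeout "$secs" bash -c 'exec 3<>"/dev/tcp/$1/$2"' _ "$host" "$port" 2>/dev/null
}

# Example (hypothetical host name):
# tcp_probe agov8-ha.cloudapp.net 80 && echo "port 80 reachable" || echo "probe failed"
```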

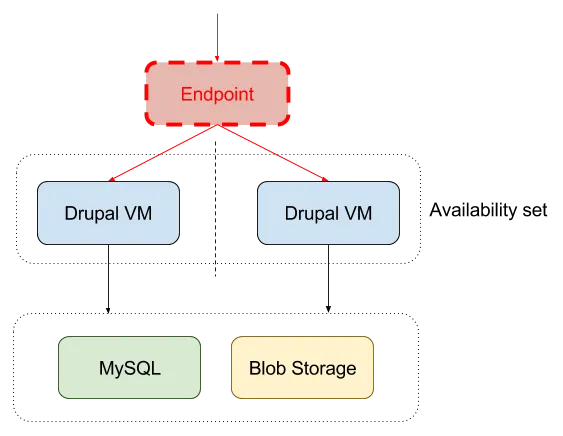

Congratulations! You have deployed a highly available Drupal 8 application on Microsoft Azure! But your job is not complete: it’s time to save costs with scaling. HA architectures on Azure are all about getting the 99.95% uptime SLA, and to get that SLA we require a minimum of 2 instances. In our current situation, let’s assume we are getting low traffic; having deployed 3 VMs, we are losing money! To get our “bang for buck” we want our application to inherit the rules of:

Azure scaling gives us 2 options applicable to our application:

Notice the phrases “turn on” and “turn off”: this is because your maximum and minimum application size is dictated by how many instances you have provisioned. However, don’t fear, you are not charged compute time for instances which are turned off; you are simply saving them for later when you need them, preconfigured and ready to go.

In this demo we will scale our cluster down to 2 instances, while keeping a max size of 3.
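The scaling behaviour described above can be sketched as a simple rule: step the instance count up or down by one when average CPU leaves a target band, then clamp it between a floor of 2 (to keep the SLA) and the 3 instances we provisioned. This is a hypothetical illustration of the idea, not Azure's autoscale algorithm, and the 60-80% CPU band is an assumed example value.

```shell
#!/bin/bash
# Hypothetical scaling rule. Arguments: current count, average CPU (integer percent),
# [low threshold, default 60], [high threshold, default 80], [min, default 2], [max, default 3].
next_instance_count() {
  local current="$1" cpu="$2"
  local low="${3:-60}" high="${4:-80}" min="${5:-2}" max="${6:-3}"
  local next="$current"
  if (( cpu > high )); then next=$((current + 1)); fi
  if (( cpu < low )); then next=$((current - 1)); fi
  # Never drop below the SLA floor or exceed the instances you have provisioned.
  if (( next < min )); then next="$min"; fi
  if (( next > max )); then next="$max"; fi
  echo "$next"
}

next_instance_count 3 20   # quiet period: prints 2
next_instance_count 2 10   # already at the floor: prints 2
next_instance_count 2 90   # busy: prints 3
next_instance_count 3 95   # already at the ceiling: prints 3
```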

Now that we have our application deployed, we can start to think about how we manage it on an ongoing basis. Here are the conversations you need to start having now that you are running a HA architecture. These topics warrant their own blog posts, so for this post here are some products from the Azure Marketplace which will let you get a feel for these implementations:

In this demo blog post we have covered many topics which relate to deploying high availability architecture on Microsoft Azure, but this is not the end. As a follow up to this blog post I strongly recommend you look at how this type of architecture fits into your current Drupal deployments and where some of the gaps might be. If you have any further questions please reach out in the comments and let’s keep the conversation going.